Search engines used to be blind. They measured signals around your content because they couldn't evaluate the content itself. Now they can. That single shift explains almost every change in SEO over the past three years.

This page is about how Google ranks pages now that AI does the evaluation. Getting cited by AI answer engines like ChatGPT, Gemini, Claude, and Perplexity is a related but different topic, covered in the AI Mentions section.

Most SEO advice makes more sense once you understand why the rules changed: not just what works now, but the mechanical reason behind the shift.

It comes down to one thing: search algorithms evolved from systems that couldn't read your content to systems that can.

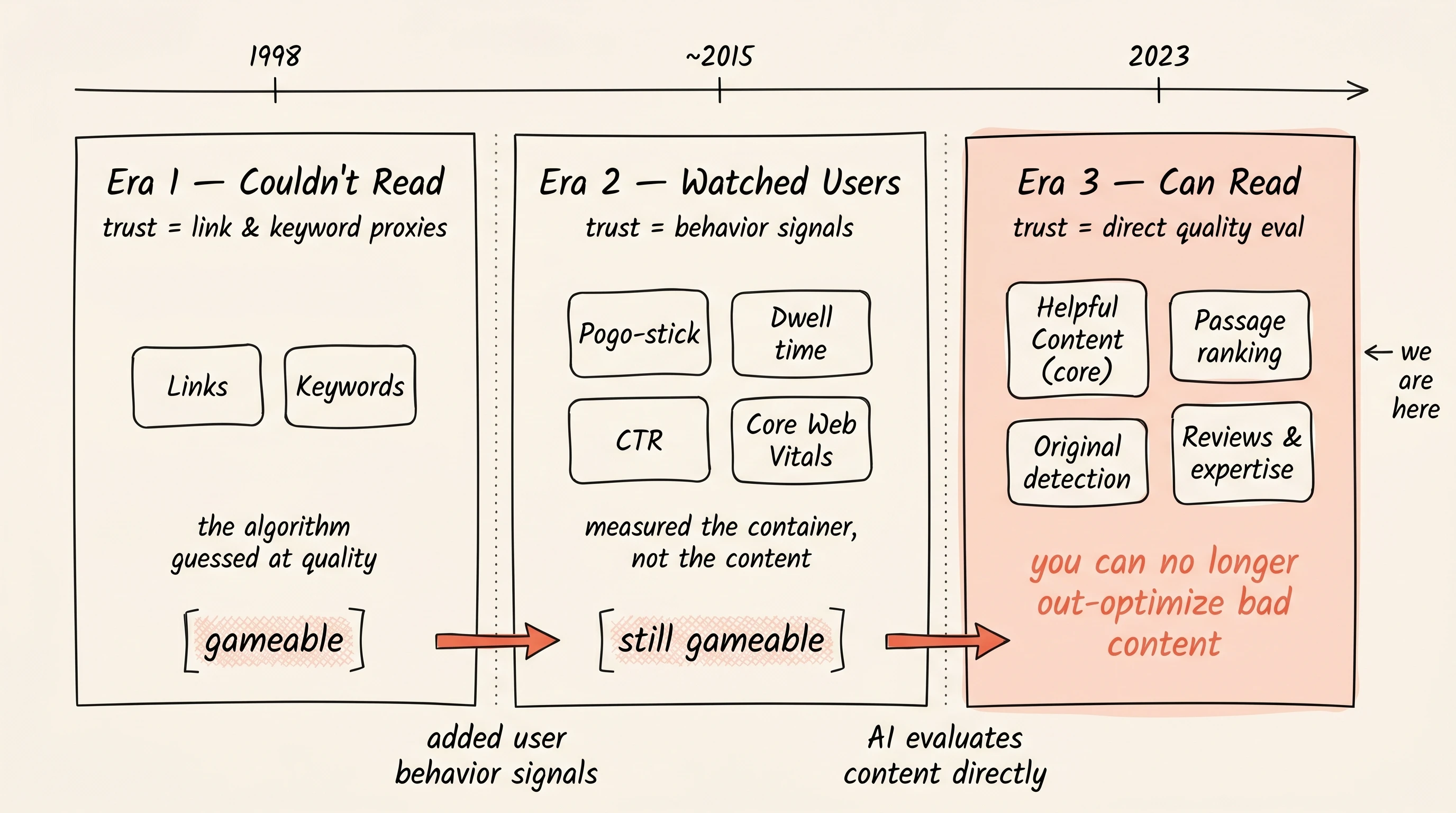

That evolution happened in three distinct eras. Each era had its own definition of "trust," its own optimization playbook, and its own set of tricks that worked until they didn't. Understanding the eras tells you not just what to do today, but why. And what's likely to work tomorrow.

Era 1: The Algorithm Couldn't Read (1998 to 2015)

Google's original breakthrough was PageRank: the idea that links are votes. If reputable sites link to you, you're probably good. This was genuinely clever, but it was a proxy for quality. Google couldn't actually read your page and judge whether the content was any good. It could only count signals around it.
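
To make "links are votes" concrete, here is a minimal sketch of the textbook PageRank power iteration. It is a classroom simplification, not Google's production system. The `toy_web` graph and its page names are made up for illustration, and the 0.85 damping factor is the value commonly quoted from the original PageRank paper.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Textbook power-iteration PageRank.

    links maps each page to the list of pages it links out to.
    Returns a dict of page -> score, with scores summing to roughly 1.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its vote evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its vote over everyone.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: three pages link to "shop".
toy_web = {
    "blog": ["shop"],
    "news": ["shop", "blog"],
    "forum": ["shop"],
    "shop": ["news"],
}
ranks = pagerank(toy_web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph, `shop` ends up with the highest score because three pages vote for it, and `forum` ends up lowest because nothing links to it. Note that nothing in the calculation looks at what any page actually says.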

Two signals dominated:

Links. More links from better sites = higher trust. This was the primary ranking signal for over a decade. It worked because earning links from reputable sites was genuinely hard and correlated with quality. But it was gameable. Link farms, paid links, private blog networks: an entire industry emerged around manufacturing the proxy instead of earning the real thing.

Keyword matching. If the words on your page matched the search query, you were relevant. Early Google was a sophisticated keyword matcher. This led to keyword stuffing, exact-match domains, and the "optimize for keywords" mindset that still haunts SEO advice today. Google now says its language matching is sophisticated enough that you don't need exact keyword terms. That's an admission that the old system was keyword-dependent.
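
A rough sketch of why keyword matching was so easy to game. The scoring function below is a deliberately naive stand-in (the share of a page's words that are query terms), not any real search engine's formula, and both sample pages are invented for the example.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def keyword_score(query, page_text):
    """Naive relevance: fraction of the page's words that are query terms."""
    words = tokenize(page_text)
    if not words:
        return 0.0
    counts = Counter(words)
    query_terms = set(tokenize(query))
    hits = sum(counts[term] for term in query_terms)
    return hits / len(words)


# Hypothetical pages: one useful, one stuffed with the target phrase.
helpful = (
    "Check the hull for cracks, then ask for engine service records "
    "before you pay for a used boat."
)
stuffed = (
    "Buy used boat. Used boat deals. Best used boat. "
    "Cheap used boat for sale, used boat."
)
query = "used boat"

print(f"helpful: {keyword_score(query, helpful):.2f}")
print(f"stuffed: {keyword_score(query, stuffed):.2f}")
```

Under this kind of scoring the stuffed page wins comfortably, even though only the other page tells the reader anything.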

The Era 1 playbook: Build links. Stuff keywords. The algorithm couldn't tell the difference between a great page and a well-packaged mediocre one, so the packaging was the job.

Era 2: The Algorithm Watched Users (~2015 to 2023)

Google got smarter but still couldn't evaluate content quality directly. So it added a second layer of proxies: user behavior signals.

Now it wasn't just "who links to you?" but "what do people do when they land on your page?"

Pogo-sticking. User clicks your result, immediately hits back, clicks a different result. Google reads this as: your page didn't answer the question. Pages with high pogo-sticking rates get demoted. This was Google's closest approximation to "this content is bad," but it's still indirect. The content might be great but slow to load, or the title was misleading.

Dwell time and engagement. How long does the user stay? Longer presumably means more engaged. But someone might stay long because they're confused. Or leave quickly because they got a perfect, fast answer.

Click-through rate. If your result gets clicked more than others at the same position, it must be more appealing. This spawned the title tag optimization industry: writing emotional, clickbait-adjacent titles to game CTR.

Core Web Vitals. Loading speed, interactivity, visual stability. Google couldn't measure content quality, so it measured content delivery quality. This was reasonable but led to obsessing over milliseconds. Google itself now says a perfect Core Web Vitals score isn't worth your time for SEO purposes alone.

The Era 2 limitation: All these signals measure the container, not the content. A beautifully designed page with garbage content could still rank if users stayed long enough. The SEO industry was built around gaming these proxies, because quality itself was still invisible to the algorithm.
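
The behavior signals above can be sketched as simple ratios over a click log. Everything here is an assumption made for illustration: the `Visit` record, the 10-second "quick return" threshold, and the sample data. Google has not published how it computes or weights these signals.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    """One click from a results page to a URL (hypothetical log record)."""
    url: str
    dwell_seconds: float
    returned_to_results: bool


def pogo_rate(visits, url, quick_seconds=10.0):
    """Share of visits to url that bounced back to the results page quickly."""
    own = [v for v in visits if v.url == url]
    if not own:
        return 0.0
    bounces = sum(
        1 for v in own
        if v.returned_to_results and v.dwell_seconds < quick_seconds
    )
    return bounces / len(own)


def click_through_rate(clicks, impressions):
    """Clicks divided by impressions, or 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0


# Invented sample log for two pages.
log = [
    Visit("/a", 3, True),
    Visit("/a", 4, True),
    Visit("/a", 120, False),
    Visit("/a", 5, False),
    Visit("/b", 90, False),
    Visit("/b", 2, True),
    Visit("/b", 60, True),
    Visit("/b", 45, False),
]

print("pogo rate /a:", pogo_rate(log, "/a"))
print("pogo rate /b:", pogo_rate(log, "/b"))
print("CTR:", click_through_rate(30, 1000))
```

Both numbers describe what visitors did, not what the page says, which is the limitation of every signal in this era.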

Era 3: The Algorithm Can Read (2023 to now)

This is the shift that changes everything.

Helpful Content became core ranking. Google's Helpful Content system is no longer a separate filter. It's baked into core ranking. The AI evaluates whether your content is actually helpful at the ranking level, not as an afterthought penalty.

Passage ranking. Google evaluates individual sections of a page independently. It doesn't just score the page as a whole. It reads each section and decides if that section answers a specific query. This is reading comprehension applied to ranking.

Original content detection. Google can identify who published something first and rank the original prominently, ahead of pages that merely cite it. It can distinguish the source from the summarizer.

Reviews and expertise systems. Dedicated ranking systems that reward insightful analysis and original research, written by people who actually know the topic. The algorithm can now distinguish genuine expertise from generic summaries.

User signals still matter, but as validation, not primary evaluation. The AI assesses quality first. User behavior confirms or corrects that assessment. Pogo-sticking still happens, but now it's "the AI thought this was good, do users agree?" rather than "we can't tell if this is good, let's see what users do."

The Era 3 reality: You can no longer out-optimize bad content. In Eras 1 and 2, a mediocre article with great links and a fast page could beat a great article with poor optimization. Now, two articles with identical optimization get ranked by content quality. And quality is finally visible to the machine.
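
The passage ranking idea described above (each section scored on its own) can be illustrated with a small sketch. Real systems use language models to judge whether a passage answers a query; the word-overlap function here is only a stand-in so the example stays runnable, and the sample page and query are invented.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(query, text):
    """Stand-in relevance: fraction of query words that appear in the text."""
    query_words = tokenize(query)
    if not query_words:
        return 0.0
    return len(query_words & tokenize(text)) / len(query_words)


def best_passage(query, page_text):
    """Score each blank-line-separated passage independently.

    Returns (index, score, passage) for the top passage, or None if the
    page has no passages. Ties go to the earliest passage.
    """
    passages = [p.strip() for p in page_text.split("\n\n") if p.strip()]
    if not passages:
        return None
    scored = [(overlap(query, p), i, p) for i, p in enumerate(passages)]
    score, index, passage = max(scored, key=lambda item: (item[0], -item[1]))
    return index, score, passage


# Hypothetical page: only the middle section answers the query.
page = (
    "Our marina opened in 1998 and sells new and used boats.\n\n"
    "To winterize an outboard motor, flush it with fresh water, "
    "add fuel stabilizer, and fog the engine.\n\n"
    "Contact us for opening hours and directions."
)
query = "how to winterize an outboard motor"

index, score, passage = best_passage(query, page)
print(index, round(score, 2), passage)
```

The point is the shape of the computation: the section that answers the question can surface even when the rest of the page is about something else.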

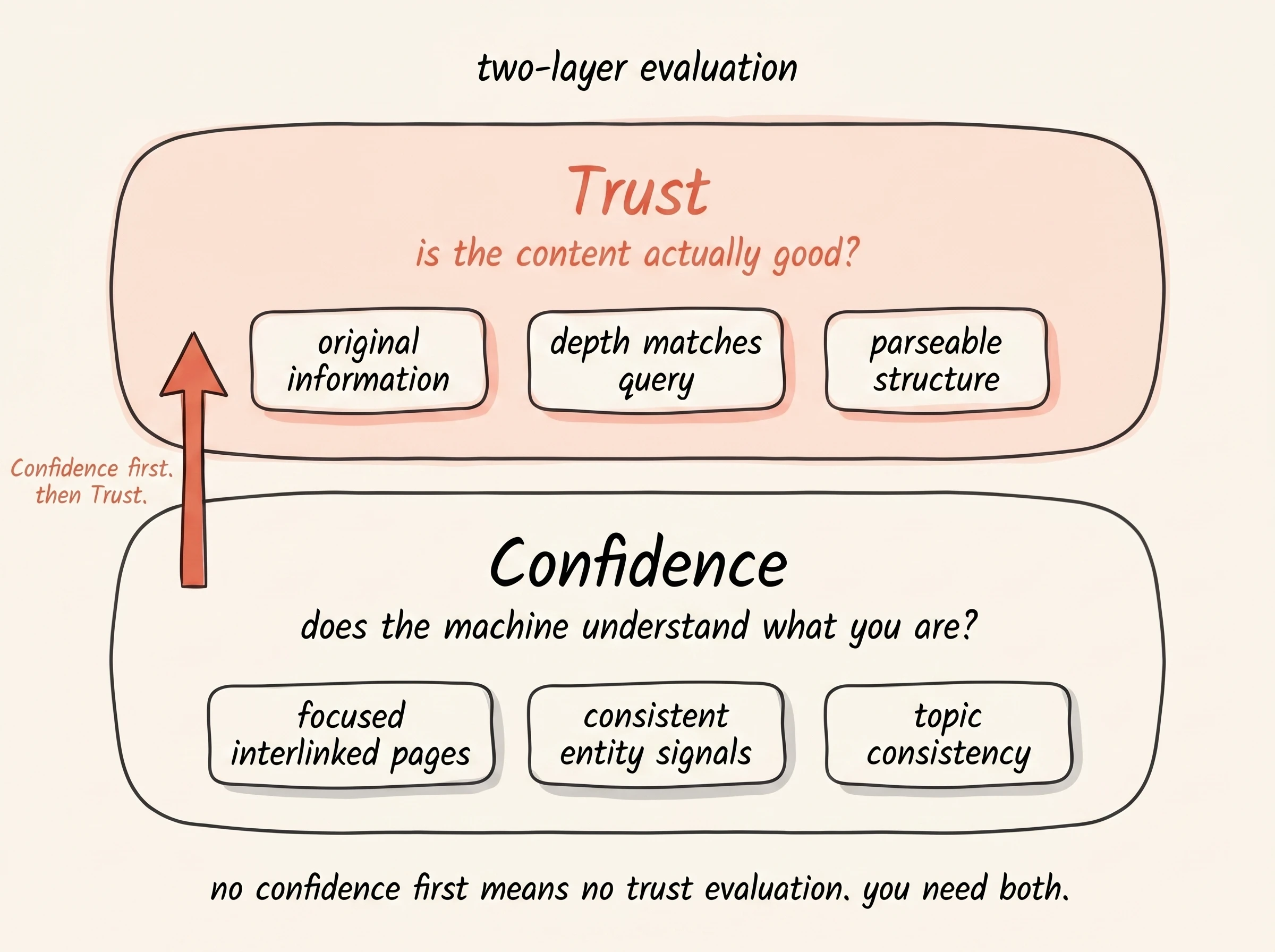

Two Layers: Confidence and Trust

The three eras explain how search evolved. But how does it actually work today? Based on Google's own documentation, accounts from AI search insiders, and patterns across the industry, two sequential layers emerge:

Layer 1: Confidence. "Does the machine understand what you are?"

Before the algorithm can trust you, it needs to understand you. This is the prerequisite. Without confidence, trust evaluation doesn't happen.

What builds confidence:

Focused, interlinked pages, one topic each. The machine doesn't have to guess what you're about. A 600-word page titled "What is Topic X?" with a clear answer and links to related pages = high confidence. Topic X buried in section seven of a 4,000-word guide = low confidence. Many small pages beat few large ones for this reason.

Consistent signals across the web. It helps when your brand, your author, your subject matter, and your content show up together across platforms, not just on your About page. Think social profiles, reviews, community presence, and consistent naming. The machine cross-references where it can.

Topic consistency. You cover related topics that make sense together. A boat seller writing about boats = high confidence. A boat seller randomly writing about cryptocurrency = confusing signal. Finish a cluster before starting the next.

Layer 2: Trust. "Is the content actually good?"

Once the machine understands what you are, it evaluates whether your content deserves trust. This is where the three eras converge. Links still contribute (Era 1 never fully died). User signals still validate (Era 2). And now there's a direct quality assessment layer on top (Era 3).

Trust in 2026 comes from:

- Saying something the machine hasn't seen before. Original data, original frameworks, original experience. Rehashing existing content = low trust. The machine can compare.

- Depth that matches the query's complexity. Not word count. Actual informational depth. A 400-word page that definitively answers a simple question outranks a 3,000-word page that buries the answer.

- Consistency between what you claim and what the web says about you. AI cross-references your positioning against reviews, mentions, and sentiment across the web. You can't call yourself "the dependable option" if people are complaining.

- Structure that machines can parse. Clear headings, answers upfront, quotable key points. A working test: can each section's core point be stated in two sentences without losing context? If yes, an AI system can extract it cleanly. If not, it skips the section. This isn't just AI optimization: the same clarity helps every reader.

The link between layers: Confidence without trust means the machine understands you but doesn't recommend you. Trust without confidence means your content might be great but the machine can't place you. You need both.
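
The two-layer model above is this page's framework, not a documented algorithm, but its sequential logic can be sketched in a few lines. The `SiteSignals` type, the 0-to-1 scores, and the 0.5 thresholds are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class SiteSignals:
    """Hypothetical 0-to-1 scores for the two layers."""
    confidence: float  # does the machine understand what you are?
    trust: float       # is the content actually good?


def evaluate(site, confidence_floor=0.5, trust_floor=0.5):
    """Apply the layers in order: trust is only checked once confidence passes."""
    if site.confidence < confidence_floor:
        return "cannot place"
    if site.trust < trust_floor:
        return "understood, not recommended"
    return "recommended"


# Three invented sites, one for each outcome.
print(evaluate(SiteSignals(confidence=0.3, trust=0.9)))
print(evaluate(SiteSignals(confidence=0.8, trust=0.2)))
print(evaluate(SiteSignals(confidence=0.8, trust=0.9)))
```

The first site shows why order matters in this model: strong content does not help if the confidence check fails first.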

Our position: For 25 years, content quality was invisible to algorithms. They could only measure proxies (links, clicks, page speed). Now AI systems evaluate quality directly. This is the single biggest shift in content marketing history. It means the old playbook (write good enough content, then out-optimize competitors) no longer works. The new playbook: plan and create content that is genuinely, measurably better. And whenever possible, add your own twist, insight, or experience. That's the part the machine cannot replicate.

What This Means for You

If you're starting fresh: build confidence first.

Pick one topic area. Cover it thoroughly with focused, interlinked pages.

Make sure your brand signals are consistent across platforms. Don't scatter across unrelated topics hoping something sticks.

If you're established but plateauing: you probably have confidence but weak trust. Look at your content honestly. Is it genuinely better than what already ranks? Does it say something new? Or is it well-packaged average?

In Era 3, the machine can tell.

If you're chasing the latest optimization trick: stop.

Every SEO tactic in history has had a shelf life. The only things that compound are depth, clarity, and genuine quality. Those aren't tricks. They're the real thing the tricks were trying to fake.